
We continue our analysis of the second set of Specialised Technical Guides (Guides 3 to 15) issued by the Spanish Artificial Intelligence Supervisory Agency (AESIA) to support compliance with the European Artificial Intelligence Act (AI Act).

On this occasion, we look at the key aspects of Guides 11 and 12, which address cybersecurity and logging:

Guide 11: "Cybersecurity"

Guide 11, entitled "Cybersecurity", develops the measures that ought to be implemented in AI systems to mitigate the risks and attacks they may face throughout their lifecycle.

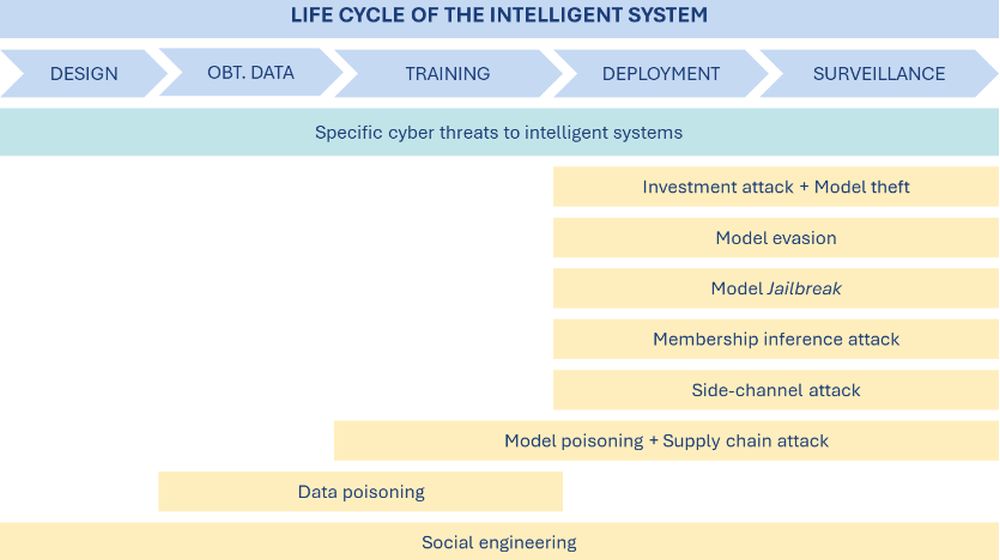

The Guide stresses that, to effectively implement these measures, it is essential to first identify the types of potential threats and attacks that exist. To this end, it provides an outline that connects the different phases of a system's lifecycle to the possible attacks in each of them:

Illustration. 'Life cycle of the intelligent system'. Source: Guide 11

This approach offers providers and deployers greater awareness of potential risks and the ability to adjust safeguards accordingly. The main cybersecurity measures are grouped into the following blocks:

1. Organisational cybersecurity measures, which must be guaranteed and maintained throughout the system's lifecycle:

- The provider shall, among other actions: plan, at a global level, the degree of cybersecurity applied to the AI system during design and development; involve the Data Protection Officer from the inception of the AI system; accompany the instructions for use of the AI system with high-level recommendations on cybersecurity applied to AI; designate the persons responsible for monitoring; and, where the AI system is delivered to the deployer in an on-premise or cloud format managed by the deployer, provide adequate instructions for protecting it.

- Organisational measures should be aligned with technical measures. Thus, in the system's installation and/or configuration processes and in the instruction manual, the provider must include information on all system-specific cybersecurity risks and on how the system is protected against them. In addition, tools should be implemented throughout the lifecycle to automate security testing and to ensure that updates maintain a consistent, non-degraded level of cybersecurity.

2. Measures to bolster system resilience against unauthorised attempts to alter its use, output or operation. These measures should be supported by inventories of system assets and actors throughout the system's lifecycle. These measures include, but are not limited to, the following:

- The provider must implement measures including: an inventory of all the actors involved in the process; the establishment of access and permission levels for each of them; a definition of the roles involved in the use of the tool; and the planning and performance of an inventory of assets, including tools, data, processes and models.

In terms of technical support, these inventories must be backed by adequate IT systems, mechanisms that enforce the access policies, and a centralised, up-to-date and accessible documentation system.

- The deployer should be aware of the actors and assets that apply to it. At an organisational level, it should integrate the provider's documentation into its internal organisational chart and, where the system is its own asset, treat it as such for cybersecurity purposes.

3. Measures to identify and mitigate vulnerabilities associated with training data, which should establish security controls to prevent tampering or manipulation:

- The provider shall, among other measures, implement security controls depending on the vulnerability identified. For example, expanding datasets through augmentation techniques when they are insufficient or establishing appropriate access control policies if vulnerabilities are detected in permissions management.

- In the case of the deployer, its primary action on an organisational level to protect systems against poisoning attacks will be to read and understand the instruction manual.

4. Measures to safeguard against adversarial attacks, via specific security controls:

- The provider shall, for example, avoid using widely known models where there is a risk of adversarial transferability, and incorporate AI-specific system security into its awareness strategies.

- The deployer should be aware of and analyse all vulnerabilities and controls that fall under its responsibility and allocate human and technical resources to mitigate them.

5. Measures against attacks aimed at discovering and exploiting AI system flaws, whether intrinsic to the model or arising from its integration into the software environment:

- The provider shall identify and inventory the defects of the selected model, document them in the threat model and implement measures to mitigate their exploitation.

- The deployer shall understand the manual developed by the provider on intrinsic system defects and the configuration mechanisms applicable to the AI system within the scope of the intended purpose.
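To make the inventory and access-permission measures in block 2 more concrete, the following Python sketch models a register of assets and actors with per-role permission levels and a deny-by-default access check. It is a minimal illustration under stated assumptions, not a format prescribed by Guide 11: the `AccessLevel` values, the class names and the example actors and assets are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class AccessLevel(IntEnum):
    """Hypothetical ordered permission levels; Guide 11 does not define these."""
    NONE = 0
    READ = 1
    WRITE = 2
    ADMIN = 3


@dataclass(frozen=True)
class Asset:
    name: str
    kind: str  # "tool", "data", "process" or "model", as listed in the Guide


@dataclass
class Inventory:
    """Illustrative register of assets, actors (with their roles) and permissions."""
    assets: dict[str, Asset] = field(default_factory=dict)
    roles: dict[str, str] = field(default_factory=dict)  # actor -> role
    permissions: dict[tuple[str, str], AccessLevel] = field(default_factory=dict)

    def add_asset(self, name: str, kind: str) -> None:
        self.assets[name] = Asset(name, kind)

    def add_actor(self, actor: str, role: str) -> None:
        self.roles[actor] = role

    def grant(self, role: str, asset: str, level: AccessLevel) -> None:
        # Permissions may only reference assets that are already inventoried.
        if asset not in self.assets:
            raise KeyError(f"asset {asset!r} is not in the inventory")
        self.permissions[(role, asset)] = level

    def can(self, actor: str, asset: str, needed: AccessLevel) -> bool:
        # Deny by default: unknown actors, unknown assets or missing grants get no access.
        role = self.roles.get(actor)
        if role is None or asset not in self.assets:
            return False
        return self.permissions.get((role, asset), AccessLevel.NONE) >= needed


inv = Inventory()
inv.add_asset("training-set-v3", "data")
inv.add_asset("classifier-v3", "model")
inv.add_actor("alice", "data-engineer")
inv.add_actor("bob", "operator")
inv.grant("data-engineer", "training-set-v3", AccessLevel.WRITE)
inv.grant("operator", "classifier-v3", AccessLevel.READ)
print(inv.can("alice", "training-set-v3", AccessLevel.READ))  # True: WRITE covers READ
print(inv.can("bob", "training-set-v3", AccessLevel.READ))    # False: no grant for this role
```

Tying permissions to roles rather than to individual actors keeps the register aligned with the Guide's separate inventories of actors and roles: reassigning a person only requires changing their role entry.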

Guide 12: "Automatically generated records and log files"

Guide 12, entitled "Automatically generated records and log files", details the measures that must be implemented by providers and deployers to comply with the requirements of the AI Act in relation to the generation and keeping of logs in AI systems.

The Guide stresses the importance of adhering to the following core principles for effective records management in AI systems: confidentiality, integrity, availability, authenticity, accessibility and traceability, accountability, and retention and deletion practices. It also identifies several key considerations, including:

- The type of actor responsible for record-keeping should be taken into account. Providers and deployers should retain the automatically generated records, to the extent that these are under their control, for at least six months, unless otherwise provided in applicable Union or national law. In the case of financial institutions, the records shall be retained as part of their mandatory documentation obligations.

- Logs must reflect the information identified as necessary following the assessment process.

- Logs for remote biometric identification systems must include at least the following specific elements: the period of each system use (the start date and time and the end date and time of each use); the reference database against which the system has matched the input data; the input data with which the search has produced a match; and the identification of the natural persons involved in verifying the results.
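The minimum content of a remote biometric identification log, together with the six-month retention floor, can be sketched as a simple record type. This is an illustrative Python sketch, not a schema defined by AESIA: the field names, the `retention_elapsed` helper, the check that at least one verifier is recorded and the approximation of six months as 183 days are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class BiometricUseLog:
    """One entry per use of a remote biometric identification system.

    Field names are hypothetical; the fields mirror the minimum elements
    listed in Guide 12.
    """
    use_start: datetime         # start date and time of the use
    use_end: datetime           # end date and time of the use
    reference_database: str     # database the input data was matched against
    matched_input_ref: str      # reference to the input data that produced a match
    verifiers: tuple[str, ...]  # natural persons who verified the results

    def __post_init__(self) -> None:
        if self.use_end < self.use_start:
            raise ValueError("use_end must not precede use_start")
        # Assumption: an entry without any identified verifier is treated as incomplete.
        if not self.verifiers:
            raise ValueError("the persons verifying the results must be identified")


# Assumption: "six months" approximated as 183 days. Applicable Union or
# national law may set a different period, so it is passed in as a parameter.
MIN_RETENTION = timedelta(days=183)


def retention_elapsed(created: datetime, now: datetime,
                      retention: timedelta = MIN_RETENTION) -> bool:
    """True once a log has been kept for at least the retention period."""
    return now - created >= retention


start = datetime(2025, 3, 1, 9, 0)
entry = BiometricUseLog(start, start + timedelta(minutes=15),
                        "reference-db-01", "input-000123",
                        ("verifier-a", "verifier-b"))
print(retention_elapsed(start, datetime(2025, 6, 1)))  # False: about three months
print(retention_elapsed(start, datetime(2025, 9, 2)))  # True: more than 183 days
```

Storing a reference to the matched input rather than the input itself is a design choice in this sketch; whether the data or a pointer to it must be kept is a question for the applicable guidance and data protection rules.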

In addition, the following processes must be addressed in a staged manner to ensure that logs are developed and managed properly:

1. Log assessment and design: this process involves analysing and determining the need to generate the log, defining the specific objectives for generating the log and establishing a scope. In this phase, the log is designed by identifying fields and categories to collect information. Within the process of assessing and designing the logs, it is necessary to: identify the need, identify the objectives, define the scope, design the log and identify those responsible for it. This process should be supported by:

- The risk-management measures set out in Guide 5, whose inventory determines which events should be logged.

- The post-market monitoring framework described in Guide 13, which identifies the information required once the system has been placed on the market.

- The human oversight measures in Guide 6, to identify the required information that the system should provide for this purpose.

This process should be reviewed on an ongoing basis, especially where changes to the system affect the underlying risk analysis.

2. Capture, storage and access control: this involves capturing, storing and retaining the records defined in the assessment and design phase so that they are protected against unauthorised access, alteration, loss or destruction. To this end, the records should be stored in a manner that ensures such protection, including by: collecting information in accordance with the criteria set out in step 1; selecting appropriate storage media and protection materials; implementing appropriate cybersecurity and access control measures; and defining roles and responsibilities for risk management, among other measures.

3. Log retention and deletion: this process establishes the requirements for retaining and deleting logs that have been created, captured and stored. These requirements are determined by two factors: the need to retain the records, as identified in step 1; and applicable regulatory requirements (for example, the Spanish Law on Data Protection and the Guarantee of Digital Rights and the GDPR, if the logs include personal data).

Deletion of a log must be authorised and documented and must, at all times, comply with the security and access measures that have been implemented. Logs subject to legal proceedings must not be deleted until appropriate authorisation has been obtained.

4. Monitoring and continuous improvement: the objective is to ensure and improve the quality and effectiveness of the risk management system, which also requires monitoring and setting specific periods for reviewing and updating the log management system. The process should comprise the following phases: monitoring and identification of potential errors, analysis of the logged data, implementation of improvements, evaluation of the improvements, and a continuous improvement cycle.
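One possible way to obtain the protection against unauthorised alteration described in step 2 is a tamper-evident, hash-chained log, in which each entry stores the SHA-256 hash of the previous one. Guide 12 does not prescribe this technique; the sketch below, including the `HashChainedLog` class and its entry layout, is a hypothetical illustration using only the Python standard library.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class HashChainedLog:
    """Append-only, tamper-evident log: each entry stores the SHA-256 of the
    previous entry, so altering an earlier entry breaks the chain."""
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, payload: dict) -> str:
        # Canonical JSON so that the same payload always hashes identically.
        body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, payload: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        stored = dict(payload)  # shallow copy so later edits by the caller do not leak in
        h = self._digest(prev, stored)
        self.entries.append({"prev": prev, "payload": stored, "hash": h})
        return h

    def verify(self) -> bool:
        # Recompute every hash from the start; any mismatch means tampering.
        prev = GENESIS
        for e in self.entries:
            if e["prev"] != prev or self._digest(prev, e["payload"]) != e["hash"]:
                return False
            prev = e["hash"]
        return True


log = HashChainedLog()
log.append({"event": "inference", "model": "classifier-v3", "ts": "2025-03-01T09:00:00Z"})
log.append({"event": "override", "operator": "bob", "ts": "2025-03-01T09:05:00Z"})
print(log.verify())  # True
log.entries[0]["payload"]["model"] = "classifier-v2"  # simulated tampering
print(log.verify())  # False
```

A hash chain only detects changes made without recomputing the later hashes; in practice the latest hash would also need to be stored or signed somewhere the log writer cannot modify.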

Finally, the Guide highlights the importance of establishing responsibilities and authorisations for each of the above processes. Responsibilities should be assigned to all personnel involved in any of these processes, and all of them must be set out in writing and reflected, where appropriate, in job descriptions or equivalent documentation.
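The requirements that deletion be authorised and documented, that logs subject to legal proceedings be preserved, and that responsibilities be assigned per process can be combined into a small deletion gate. The Python sketch below is hypothetical: the role names in `AUTHORISED_ROLES`, the `LogStore` class and the treatment of a legal hold as a simple blocking flag are assumptions, not requirements taken from the Guide.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical written assignment of each log-management process to the roles
# authorised to carry it out; the Guide does not prescribe role names.
AUTHORISED_ROLES: dict[str, set[str]] = {
    "design": {"log-owner"},
    "capture": {"log-owner", "platform-engineer"},
    "delete": {"log-owner", "records-manager"},
    "review": {"log-owner", "compliance-officer"},
}


def is_authorised(process: str, role: str) -> bool:
    return role in AUTHORISED_ROLES.get(process, set())


@dataclass
class LogStore:
    logs: dict[str, str] = field(default_factory=dict)    # log_id -> content
    legal_holds: set[str] = field(default_factory=set)    # logs subject to proceedings
    deletion_records: list[dict] = field(default_factory=list)

    def delete(self, log_id: str, actor: str, role: str, reason: str) -> bool:
        """Delete only if the role is authorised and no legal hold applies.
        Every attempt, granted or refused, is documented."""
        held = log_id in self.legal_holds
        granted = is_authorised("delete", role) and log_id in self.logs and not held
        self.deletion_records.append({
            "log_id": log_id, "actor": actor, "role": role, "reason": reason,
            "granted": granted, "legal_hold": held,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if granted:
            del self.logs[log_id]
        return granted


store = LogStore(logs={"log-1": "...", "log-2": "..."}, legal_holds={"log-2"})
print(store.delete("log-1", "carol", "records-manager", "retention period elapsed"))  # True
print(store.delete("log-2", "carol", "records-manager", "retention period elapsed"))  # False: legal hold
print(store.delete("log-2", "bob", "operator", "clean-up"))  # False: role not authorised
```

Recording refused attempts as well as granted ones is a design choice in this sketch: it documents every deletion request, not only the successful ones.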

The content of this article is intended to provide a general guide to the subject matter. Specialist advice should be sought about your specific circumstances.
